Set Up AWS MWAA in 7 Easy Steps

How to set up Airflow in a few minutes instead of hours or days.

If you have ever used Airflow, you know that it belongs in a cluster environment. Sure, you can run it on one machine with a sequential scheduler, but then you are not really taking advantage of the performance of multiple workers handling dozens, if not hundreds, of data pipelines. The major drawback of running Airflow as a cluster is setup and maintenance. Spinning up the cluster, then installing and configuring it for each of your development environments, is time-consuming. Don't forget the time needed for future upgrades or for scaling the cluster up and down. Now replicate this for all your environments. None of these steps to get Airflow running add value to your data team.

As data engineers, we create value by building data pipelines, data processes, and data products for downstream consumers. The solution is to move to an auto-scaling managed service. That allows us to create value for our teams and products instead of sinking time and money into tinkering with infrastructure.

In the last two years, cloud providers have been expanding their managed services to include more data engineering-oriented frameworks and tools. Both GCP and AWS offer a managed Airflow solution. GCP offers Cloud Composer, while AWS offers Managed Workflows for Apache Airflow (MWAA).

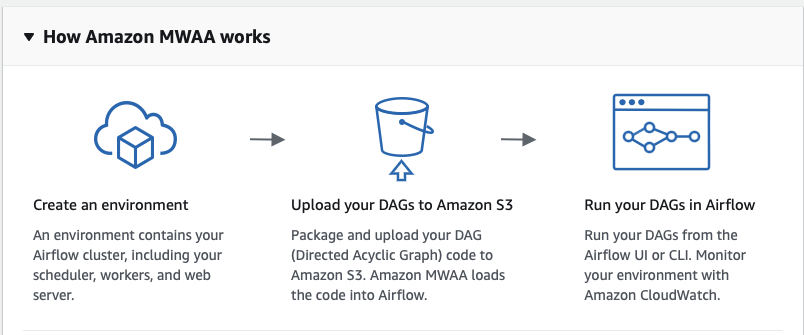

For this post, I will focus on MWAA. AWS provisions and manages the servers needed to run it. I will provide a high-level overview of how to set up and use MWAA, and I will assume you have some experience with AWS and its services. If you do not know what terms such as security group or VPC mean, this overview might be too advanced for you. The great thing about MWAA is that all your Airflow code can reside in an S3 bucket, and MWAA automatically syncs with that bucket. This is great for your CI/CD process, since a deployment is just a simple file sync with the bucket. This is a simple diagram of how MWAA works.
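To make the "simple file sync" idea concrete, here is a minimal sketch of what a CI/CD deploy step could look like in Python. The function names `plan_uploads` and `upload` are hypothetical, and the local layout (a `dags/` folder next to `requirements.txt`) is an assumption. The planning step is pure Python; only `upload` talks to AWS, through boto3's `upload_file`.

```python
from pathlib import Path
from typing import List, Tuple


def plan_uploads(local_root: Path, key_prefix: str = "") -> List[Tuple[Path, str]]:
    """Map every file under local_root to the S3 key it would be uploaded to."""
    prefix = key_prefix.strip("/")
    plan = []
    for path in sorted(local_root.rglob("*")):
        if path.is_file():
            relative = path.relative_to(local_root).as_posix()
            key = f"{prefix}/{relative}" if prefix else relative
            plan.append((path, key))
    return plan


def upload(plan: List[Tuple[Path, str]], bucket: str) -> None:
    """Upload the planned files (needs boto3 and AWS credentials)."""
    import boto3  # imported lazily so plan_uploads works without boto3

    s3 = boto3.client("s3")
    for path, key in plan:
        s3.upload_file(str(path), bucket, key)
```

In a pipeline you might call `upload(plan_uploads(Path("airflow")), "my-airflow-bucket")`, where both the folder and the bucket name are placeholders. Note that this sketch only adds or overwrites files; it does not delete objects that were removed locally.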

Requirements

Spinning up an MWAA instance is fairly easy. Make sure you have the following ready before you start:

- Permissions to create roles, security groups and VPCs.

- An S3 bucket for your Airflow files, with a subdirectory for your DAGs such as 'dags'.

- A VPC for MWAA to run in. MWAA will create one for you if you do not have one.

Step 1: Log into AWS

Search for "Managed Workflows for Apache Airflow" in the AWS console.

Step 2: Create Environment

Select Create Environment.

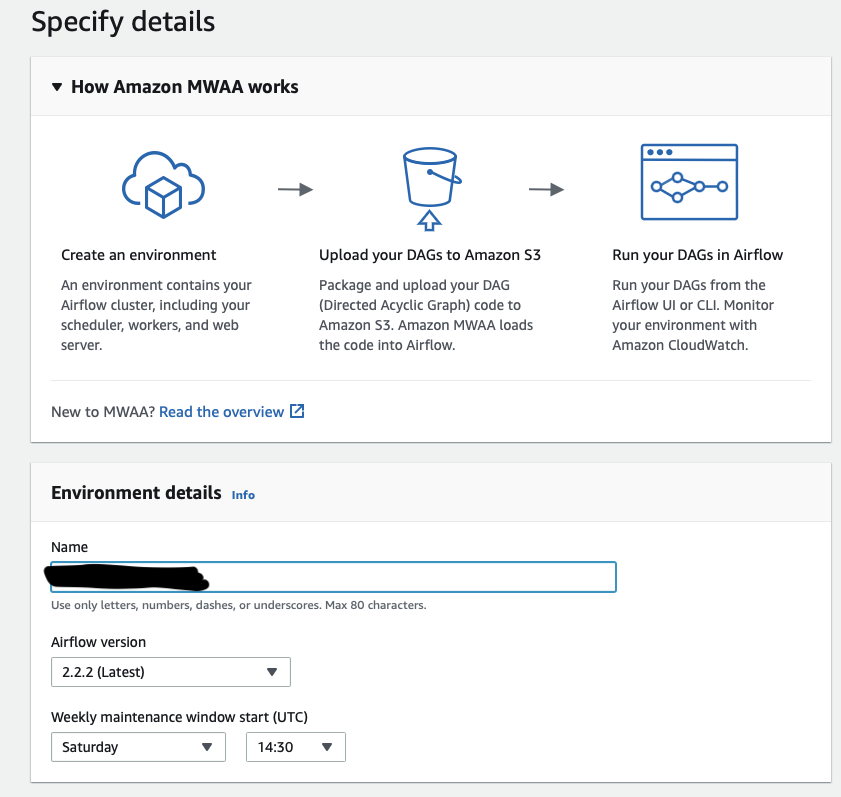

Step 3: Environment Details

Enter your environment name. I called mine xxxx_dev to indicate a dev environment. Also select the Airflow version if you want to run a specific one.

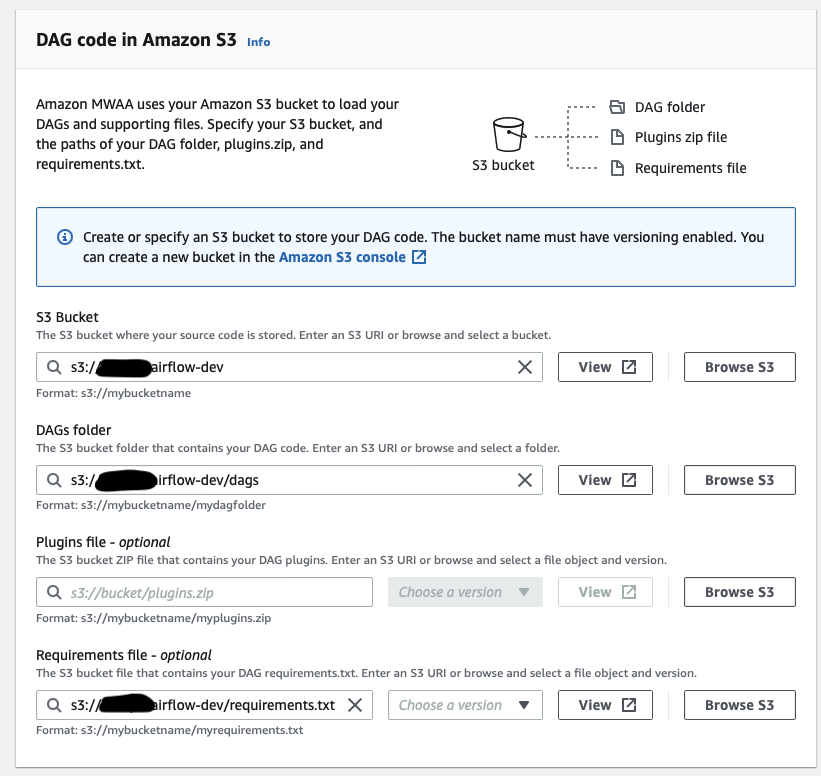

Step 4: S3 Buckets

Enter the path of your S3 bucket and the path to your DAGs folder. You can also enter the path to your requirements.txt. This is not required, and you can always add it later. If you already have your DAGs and requirements ready, you can upload them to your S3 bucket now. See the Resources section below for how to install dependencies, or just to see which dependencies are built in.
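If you later script this step instead of using the console, the same three values map onto parameters of boto3's `create_environment` call for MWAA. The sketch below only builds that part of the request; the helper name `s3_settings` and the bucket name are hypothetical, and the parameter names reflect my understanding of the boto3 API.

```python
from typing import Optional


def s3_settings(bucket: str, dags_prefix: str = "dags",
                requirements_key: Optional[str] = None) -> dict:
    """Build the S3-related arguments for an MWAA create_environment request."""
    settings = {
        "SourceBucketArn": f"arn:aws:s3:::{bucket}",  # the bucket is passed as an ARN
        "DagS3Path": dags_prefix,                     # path relative to the bucket root
    }
    if requirements_key is not None:
        # Optional, just like in the console form.
        settings["RequirementsS3Path"] = requirements_key
    return settings


print(s3_settings("my-airflow-bucket", requirements_key="requirements.txt"))
```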

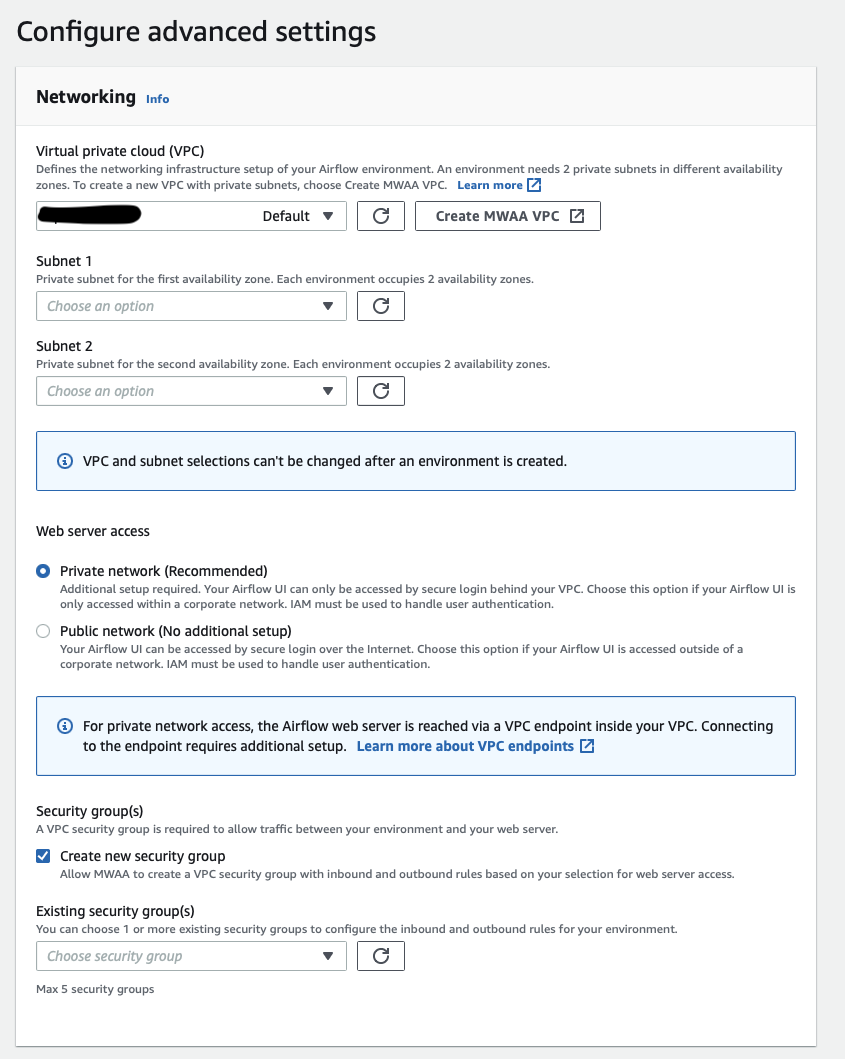

Step 5: Networking

In this step, you need to pick your VPC and your security group. If you do not have existing ones, MWAA can create them for you. For access to your webserver, the UI where you manage your Airflow jobs, you can choose Private or Public. Both use IAM to handle login. Pick the one that fits your needs. If you're not sure, or you just want to test out MWAA, pick Public, as you will not need to set up extra connections to the VPC, such as a VPN.
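For readers who prefer code to console screens, the networking choices above could be expressed roughly as follows. This is a sketch under assumptions: `network_settings` is a hypothetical helper, the IDs are placeholders, and the two-subnet check reflects my understanding that MWAA expects exactly two subnets. The keys `NetworkConfiguration` and `WebserverAccessMode` are the ones I know from boto3's `create_environment`.

```python
from typing import List


def network_settings(subnet_ids: List[str], security_group_ids: List[str],
                     public_webserver: bool = True) -> dict:
    """Build the networking arguments for an MWAA create_environment request."""
    if len(subnet_ids) != 2:
        # Assumption: MWAA wants two subnets, in different availability zones.
        raise ValueError("expected exactly two subnet IDs")
    return {
        "NetworkConfiguration": {
            "SubnetIds": list(subnet_ids),
            "SecurityGroupIds": list(security_group_ids),
        },
        # Public is the easier choice for testing; Private needs VPC access such as a VPN.
        "WebserverAccessMode": "PUBLIC_ONLY" if public_webserver else "PRIVATE_ONLY",
    }


print(network_settings(["subnet-aaaa", "subnet-bbbb"], ["sg-cccc"]))
```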

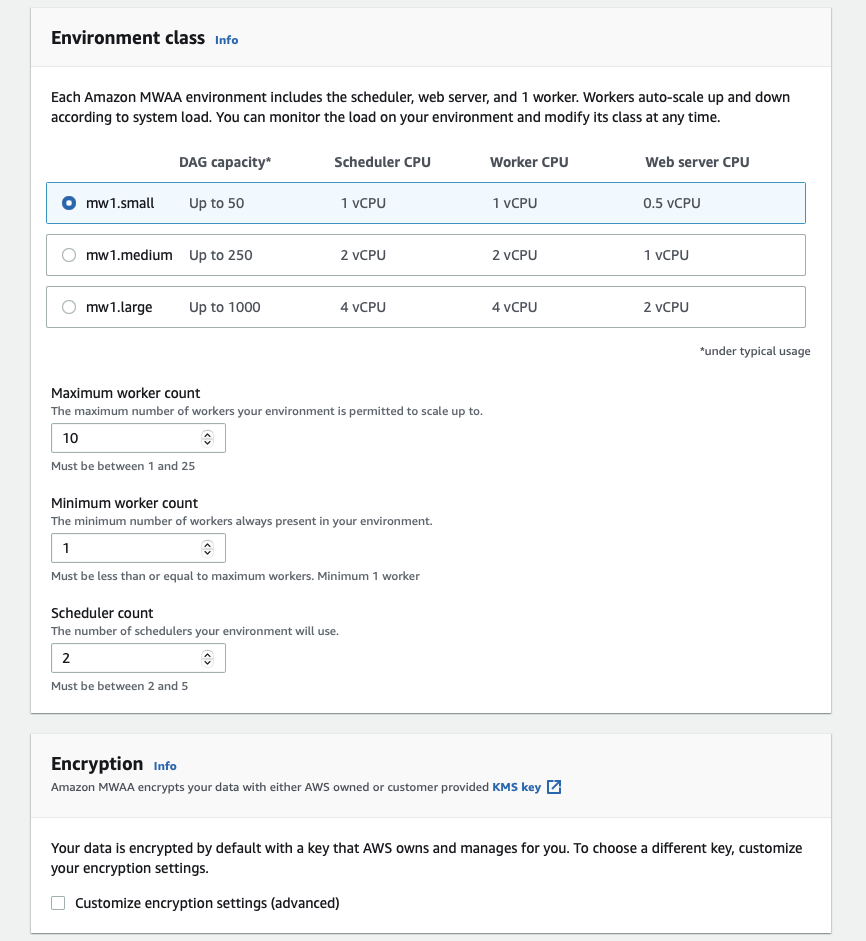

Step 6: Cluster Configuration

Configure your cluster. Select the environment class based on the number of DAGs you plan to run. If you're just testing MWAA, select the lowest tier, which is sized for up to 50 DAGs. You can also define the minimum and maximum number of workers for your Airflow cluster. If you're not sure or just testing out MWAA, you can leave these at the default values. You can leave encryption as is unless you have your own method you want to use.
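The same sizing decision, written as a small Python sketch. The helper name `sizing_settings` is hypothetical, and the defaults shown (class `mw1.small`, 1 to 10 workers) are my assumption of what the console pre-fills, so check them against your own console before relying on them.

```python
def sizing_settings(environment_class: str = "mw1.small",
                    min_workers: int = 1, max_workers: int = 10) -> dict:
    """Build the sizing arguments for an MWAA create_environment request."""
    if min_workers < 1:
        raise ValueError("min_workers must be at least 1")
    if min_workers > max_workers:
        raise ValueError("min_workers cannot exceed max_workers")
    return {
        "EnvironmentClass": environment_class,  # mw1.small is the lowest tier
        "MinWorkers": min_workers,
        "MaxWorkers": max_workers,
    }


print(sizing_settings())
```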

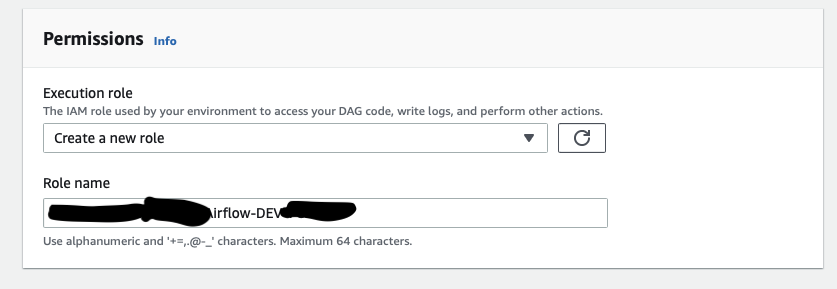

Step 7: Permissions

Last are the permissions. Create a new execution role for MWAA. While you can use an existing role, I think it is more secure to tie this instance of MWAA to a role used exclusively by this instance. You can leave logging as is unless you have a specific logging preference.
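When the console creates the execution role for you, it also sets up who is allowed to assume it. If you ever create a dedicated role yourself, the trust policy would look roughly like the sketch below. The two service principals are the ones I understand MWAA to use; the function names and the role name are hypothetical.

```python
import json


def execution_role_trust_policy() -> dict:
    """Trust policy that lets the MWAA service assume the execution role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "Service": ["airflow.amazonaws.com", "airflow-env.amazonaws.com"]
                },
                "Action": "sts:AssumeRole",
            }
        ],
    }


def create_execution_role(role_name: str) -> None:
    """Create the dedicated role (needs boto3 and IAM permissions)."""
    import boto3  # imported lazily so the policy can be inspected without boto3

    boto3.client("iam").create_role(
        RoleName=role_name,
        AssumeRolePolicyDocument=json.dumps(execution_role_trust_policy()),
    )


print(json.dumps(execution_role_trust_policy(), indent=2))
```

The role still needs permission policies for S3, logging, and whatever else your DAGs touch; those are not shown here.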

That is it! You just created an MWAA instance in a few minutes! It will usually take 30-60 minutes for the MWAA instance to be fully up and running. It will take a tad longer if you have a lot of dependencies.
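Rather than refreshing the console while you wait, you could poll the environment status from a script. The sketch below is one way to do it, assuming the status strings `AVAILABLE` and `CREATE_FAILED` as returned by boto3's `get_environment`. The names `wait_until_available` and `mwaa_status` are hypothetical, and the status lookup is passed in as a function so the waiting logic can be tried without AWS.

```python
import time
from typing import Callable


def wait_until_available(get_status: Callable[[], str],
                         poll_seconds: int = 60,
                         timeout_seconds: int = 2 * 60 * 60,
                         sleep: Callable[[float], None] = time.sleep) -> str:
    """Poll get_status until the environment is available, failed, or timed out."""
    waited = 0
    while True:
        status = get_status()
        if status == "AVAILABLE":
            return status
        if status == "CREATE_FAILED":
            raise RuntimeError("environment creation failed")
        if waited >= timeout_seconds:
            raise TimeoutError(f"still {status} after {waited} seconds")
        sleep(poll_seconds)
        waited += poll_seconds


def mwaa_status(name: str) -> str:
    """Look up the current status of an MWAA environment (needs boto3)."""
    import boto3  # imported lazily so the polling logic works without boto3

    return boto3.client("mwaa").get_environment(Name=name)["Environment"]["Status"]
```

A call such as `wait_until_available(lambda: mwaa_status("xxxx_dev"))` would then block until the environment is ready.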

Next Steps

- Upload a test DAG to your S3 bucket.

- Update your security policies so MWAA has access to S3, databases, or any other AWS services you use.

- If you already added your existing Airflow DAG code to the DAGs subdirectory in your S3 bucket, update your connections on the Airflow webserver. You should now be able to see your DAGs in the webserver UI and run them. If your old Airflow implementation has a complex directory structure for its modules, check out the resources below on how to restructure the files and directories in your Airflow S3 bucket to be compatible with MWAA. If you're using an IDE, the imports should correct themselves automatically as you move directories around.

Resources

Sample DAGs: https://docs.aws.amazon.com/mwaa/latest/userguide/sample-code.html

Installing dependencies: https://docs.aws.amazon.com/mwaa/latest/userguide/working-dags-dependencies.html#working-dags-dependencies-changed

Plug-ins and Modules: https://docs.aws.amazon.com/mwaa/latest/userguide/configuring-dag-import-plugins.html#configuring-dag-plugins-prereqs