Apache Airflow, Not Just for Data Pipelines

Alternate Use Cases

If you come from the world of data engineering, or if your company ingests and processes large quantities of data at regular intervals, you are probably familiar with Apache Airflow. But did you know that Airflow can do more than build robust data pipelines? In this post, I’ll go over a few things you can do with Airflow besides organizing and running your data pipeline tasks.

Before I get into what Airflow can do beyond data pipelines, let’s go over what Airflow is. For those who are unfamiliar, Airflow is a popular platform that data engineers use to set up ETL pipeline tasks that run on a schedule. You do not need Airflow to run a scheduled task; cron is sufficient for that. What Airflow adds is the ability to create pipeline tasks that depend on one another, which cron cannot do.

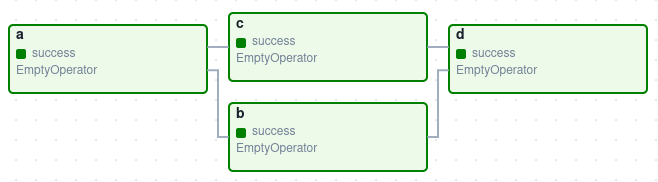

Directed Acyclic Graphs, or DAGs, allow data engineers to set up data pipeline tasks programmatically and visually, with tasks pointing to each other as dependencies. Computer science topic alert: to learn more about what a DAG is, check out Wikipedia. As a core feature of Airflow, DAGs allow data engineering teams to handle hundreds, if not thousands, of long and complex data pipelines. Unlike cron, Airflow also lets you monitor all of these pipelines visually, in real time, from its web UI. See Fig 1 for a simple Airflow DAG:

Fig 1
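To make the dependency idea concrete, here is a minimal sketch in plain Python. It is not Airflow code: it uses the standard library's `graphlib` module to order a hypothetical three-task pipeline (the task names `extract`, `transform`, and `load` are made up for illustration) so that each task comes only after the tasks it depends on. Airflow's scheduler does far more than this, but the ordering guarantee is the part cron does not give you.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def run_order(deps):
    """Return the tasks in an order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = run_order(dependencies)
print(order)
```

Because the graph must be acyclic, a pair of tasks that depend on each other cannot be ordered at all; `graphlib` raises `CycleError` in that case.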

Not all data-related tasks fall under the customary understanding of a data pipeline: ingesting, cleaning, and processing data, then loading it into its destination. So what else can we do with Airflow beyond data pipelines?

- Data Maintenance: Sometimes you need to perform maintenance on your data outside of your normal data pipelines. For example, if your company has a 30-day retention policy for processed data, you can set up a DAG that runs every day to remove processed data that is more than 30 days old.

- Report Generation: You can generate reports as the final task of any data pipeline, but what if not all of your DAGs need reports? Adding report tasks to your DAGs and removing them again is time-consuming, and your DAGs do not all run at the same time. You can tie them together with dependencies, but then if one task fails, the DAG fails and the reports may never get produced. If you need daily reports from multiple data pipelines, you can set up a separate DAG that only outputs reports, with the processed data as its dependency.

- Machine Learning Tasks: Machine learning tasks can be independent of the ETL data pipeline. A failure in your ETL tasks should not be a failure in your machine learning tasks. Examples include daily retraining of models and generating snapshots to feed into models. These are not necessarily ETL tasks, but they are data-oriented tasks that can be scheduled with dependencies.

- Everything else, from system to DevOps-related tasks that need to be scheduled: Airflow can replace a lot of your cron work that has nothing to do with data, data science, or machine learning. Remember, the scheduler is a core component of Airflow, so anything you can schedule and run via a bash operator, you can run with Airflow. You are not limited to Airflow’s operators or Python. Not only can you set dependencies, you also get a visual interface to monitor these tasks. For example, you can set up a weekly task to clean up old log files on your EC2 machine.
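
The data maintenance and log cleanup examples in the list above come down to the same job: delete files older than some cutoff. Below is a minimal sketch of that job in plain Python, assuming the data or logs live as files in a single directory. The function name `remove_expired_files` and its parameters are hypothetical, and the Airflow wiring is left out on purpose because it depends on your Airflow version; in practice you would call a function like this from a Python-based task, or run an equivalent shell command through a bash operator.

```python
import time
from pathlib import Path

RETENTION_DAYS = 30  # matches the 30-day retention example above


def remove_expired_files(directory, retention_days=RETENTION_DAYS, now=None):
    """Delete regular files in `directory` last modified more than
    `retention_days` ago, and return the sorted names of the deleted files.

    `now` is a Unix timestamp; it defaults to the current time and exists
    mainly so the cutoff can be pinned when testing.
    """
    now = time.time() if now is None else now
    cutoff = now - retention_days * 24 * 60 * 60
    removed = []
    for path in Path(directory).iterdir():
        # Only top-level regular files are considered; subdirectories are skipped.
        if path.is_file() and path.stat().st_mtime < cutoff:
            path.unlink()
            removed.append(path.name)
    return sorted(removed)
```

Scheduling this once a day covers the retention case, and running it weekly with a different directory and cutoff covers the log cleanup case.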